Obsessions

That One Bug

Most experienced game programmers have a story about That One Bug; A war story they tell to amaze, amuse, or impress other game programmers, often over drinks at conferences or other meet-ups. They’re our industry’s equivalent of a fisherman’s “The One That Got Away” stories.

I’ve had a few of these “That One Bug” stories over my time in the industry. Perhaps the first one was a crash in the PlayStation 2 version of Test Drive Unlimited. This crash would only happen in a retail build, only when playing from a burnt disc (as opposed to running from a simulated file system over a network, which is a common console dev kit feature). It would only happen when in a full network session of eight online players for about ten minutes, and only if you were playing on one of the newer, slimline PS2s; not in any of the older models. And it was crashing inside a middleware networking library, to which we didn’t have any source code.

This bug was eventually “fixed” by switching away from that middleware library, and instead using a much slower but more stable alternative.

This “fix” makes it an unsatisfying bug about which to weave tales, because there’s no real “payoff” at the end. I never learned (and presumably never will learn) precisely what the cause of the crash was. But this was my first encounter with a really big, inscrutible bug, and I’ll always remember it for that.

Test Drive Unlimited

This story was replaced only a few years later, by a crash bug I had to track down in the Wii version of Star Wars: The Force Unleashed. This one made for a much more satisfying story, and has served me well for years.

Like the TDU crash, this one also would only occur in retail builds, and only when running from a burnt disk. About the only unexciting part of this bug is that it was a “100%” bug; it would happen reliably, at a very predictable moment, every time you followed the instructions.

Ordinarily, bugs which are easy to reproduce don’t make good war stories. But this one did, because even though there was a 100% reproducible test case, the steps in the test case were.. very particular:

- Be playing a retail build of the game, from a disc.

- It must be an EU build of the game, not a US build.

- It must be playing on a PAL50-formatted television. (Not PAL60/SECAM)

- The game language must be set to German.

- You must play through the whole game from the start to a cutscene near the end of the game, in a single sitting. (Note: The crash will not occur if you load a saved game; only if you play through the whole game from the start)

- Notice that the game crashes at a particular, reliable moment in the middle of the cutscene, if all the above conditions are true. If you abort the cutscene after it begins, but before the point where the crash happens, then there is no crash and the game continues to play normally past the cutscene.

Each time we wanted to test or verify this crash, it took our QA department folks six hours to speedrun through the game, just to reach the crash again. And of course, if you needed to modify the build they were testing, that required building a new disc image and burning physical discs for them to test with.

So from a programmer’s point of view, the interesting part of this bug was the sheer amount of time it required in order to reproduce the crash, and that it couldn’t be done with a development build, or even a dev kit so that we could attach a debugger to make debugging easier.

In the end, it took me twelve hours of debugging to find the problem, and I consider myself amazingly lucky to have found it that quickly. (And of course, it would have taken a lot longer if I hadn’t had the help and support of all the unsung heroes in our QA department!)

This one eventually turned out to be a single byte of uninitialised memory in a very complicated and opaque system. In the specific combination of steps above, everything just happened to line up exactly right such that that single uninitialised byte would happen to reliably contain the value ‘0’, at that particular moment of the cutscene. If literally any other value was in that byte then everything would be fine, but with a value of ‘0’, it would crash every single time.

Star Wars: The Force Unleashed

So that has been my “That One Bug” story for several years. But I now have a new story to tell.

It perhaps isn’t quite as exciting as the Star Wars one. The bug itself is certainly a bit less sexy. And it’s not in a Star Wars-licensed product (which always seems to perk up people’s attention). And unlike these other bugs, it’s not a crash. It doesn’t even cause any serious problems in the game; it’s just a tiny, single-frame visual glitch.

What’s more, unlike these other bugs, this one does happen in unoptimised development builds, just as much as in full retail builds. I’ve seen it happen on each of Windows, Mac, and Linux computers. I’ve seen it happen on both AMD and NVidia graphic cards. It seems to happen everywhere.

But even though it sounds like this bug couldn’t be any more boring and ordinary, I still like this story more than all those earlier stories. And I think maybe other programmers will, too.

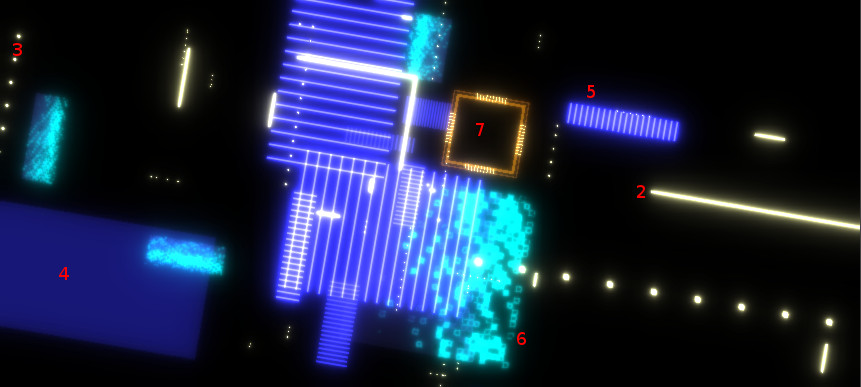

This bug is a single-frame glitch visible somewhere in the background of MMORPG Tycoon 2’s main menu. Here’s a pretty good capture of it:

The Glitch

Caveat: This gif isn’t of the real glitch; it’s me synthetically re-creating it, now that I know what was causing it.

Even though I now know what caused the glitch, I still was unable to trigger it on demand; the best I could do was make it happen perhaps once in twenty attempts. Luckily, I managed to capture a good one.

This is an unusually good view of the glitch, in part because it happens to be flashing across almost the entire screen (usually it would only flash in a small area), and in part because this gif is captured at only 30fps. When the glitch happened for real, it would appear on screen for only half as long as it does here. And finally, here it happens for one frame out of about a hundred. You know you’re going to see the glitch if you just watch for a second or two. In the game itself, it would only appear a few times per hour, on average.

The glitch seemed to be entirely random. You’d sit and stare at the menu for an hour, and maybe you wouldn’t even see it once during that time. I’ve lost count of the number of times that I thought I’d finally fixed this bug because I stopped seeing it, only to discover a week later that it was still there; that it had just been hiding for a while, and now was back again.

To an experienced game programmer, facing the combination of “extremely rare” and “random” in a bug report likely gives you a sinking feeling deep in the pit of your stomach. Bugs which are “rare” and occur at random often feel likely to be caused by a memory problem. Like we’re reading from or writing to a subtly invalid part of memory, which happens to occasionally contain unexpected, incorrect values. This type of bug is the worst kind of bug. I was desperately hoping that the glitch wasn’t this. But.. sinking feeling.

Remember this note. We’re going to come back to this topic again later on, in a way that I didn’t expect.

I’ve been trying and failing to fix this bug for at least three years. I’ve lost count of the number of times I’ve gone hunting to find it, only to fail, or to think that I’d fixed it, but for it to pop up again a few days later.

This is undoubtedly the longest-lived bug I’ve ever found and fixed, and the one which has defeated me the most times.

What follows is a story of the hunt for this bug; for this rendering glitch. I’ll try not to assume you have too much knowledge about programming, but if you’re not interested in getting into the gory technical details, please feel free to skip the rest of this post; the important point is that the bug is finally fixed!

Illustration by Rockwell Kent

The Hunt

Starting out, I knew that this glitch only seemed to occur on the game’s main menu; I had never seen it happen anywhere else, and had never heard reports from testers of seeing it anywhere else.

Since it only happened here, I suspected that the problem was likely to be in one or more of the objects that I draw while we’re at the main menu, and which don’t get drawn within the game itself. Surely one of them must be doing something illegal somehow, which is doing something bad enough to corrupt a single rendered frame, but not quite bad enough to trigger any error messages from OpenGL.

I knew that the glitch happened on many different sets of hardware and operating systems, so I assumed that this was a problem in my own code, and not a bug in a particular OS or hardware driver.

Assumption 1: The problem is something in the main menu. Assumption 2: The problem is my fault.

Spoiler: One of these assumptions was wrong.

There are currently seven different visual elements to the main menu background effect. These are:

Digital Elements

- The “Front Plate”; the grid that you can see behind the main menu in the gif above.

- The “Digital Lines”; the pale yellow lines, visible in front of the “Front Plate”.

- The “Digital Dotted Lines”; like the Digital Lines, but made of dots.

- The “Digital Solid Blocks”, which are really just giant rectangles.

- The “Digital Line Blocks”, which also are giant rectangles, but formed out of lines.

- The “Digital Rez Blocks”, which slowly appear over time, with a “rez-in” effect.

- The “Digital Chips”, which are stylised computer chips.

Element types 4-7 only exist behind the “Front Plate”. Those are not supposed to be visible while you’re actually in the main menu, only after you start the transition out. But they do exist back there behind the plate, and are being rendered all the time, even if we can’t see them. This means that they could theoretically be causing the problem, even though they aren’t visible. If I just removed them all, the glitch seemed not to happen any more, which seemed to support the idea that one or more of them was the problem. (Although with the glitch happening so infrequently, it was hard to be certain that it had really stopped)

Almost every one of these elements uses a special OpenGL shader which isn’t used for anything else in the game. For this reason, assuming that one or more of these elements were responsible for the glitch, a possible bug in their shaders was high up on my list of likely causes.

Another possibility I worried about was that element types 2-7 were occasionally being destroyed and re-created while we’re at the main menu. From what I could tell from brief glimpses of the glitch, it looked like when the glitch happened I was seeing a flash of elements that were supposed to be behind the front plate. Perhaps when the game was recreating one of those elements, it didn’t always get put into the correct place immediately? Perhaps it wasn’t until the next frame after creation that it was put in the correct spot, behind the plate, and for that single frame it was being drawn in front of it? (This is actually an extremely common bug in games)

I also considered that perhaps something bad was happening to the Front Plate itself, causing parts of it to not always be drawn. I suspected that only because it does have a highly complicated shader to make that animated grid effect, and the shader does have the ability to not draw portions of itself. Perhaps there was a bug in the code which led to it occasionally discarding bits of itself, just for a single frame? But removing all of the complexity from the shader didn’t make the glitch go away, so I was pretty sure that the problem was actually coming from elsewhere.

Finally, I also worried that it might be the rendering system getting ahead of itself; using a partially-rendered screen image as an input to a later rendering stage before it was completely drawn; that perhaps the reason why I could often “see through” that front plate was that a partially-complete main screen image had been fed into the next phase of rendering before the front plate finished drawing itself. (it’s pretty tricky to make OpenGL make a mistake like that, but I was grasping at straws and trying to think of anything that could result in a glitch like what I was seeing.)

That pretty well covers the first three years of debugging efforts. Every week or two I would become obsessed by one or another of these suspicions, and dig deeply into it. I’d investigate to see how things worked, and whether I could find something that was being done incorrectly.

Usually I couldn’t. And when I did think I’d found the problem, the glitch would hide itself, and then return a few days or weeks later, once I’d moved on to other things.

And so the bug quietly persisted.

For literal years.

Illustration by Rockwell Kent

The Clue

I would usually begin hunting for this glitch when I was working on a GUI task. I do most of my GUI layout work in a test environment which uses this same ‘digital’ background, and so when I’ve been staring at it for long periods of time I’m more likely to see the glitch a lot more frequently.

Even so, I’d still only see the glitch perhaps once or twice per day. But every once in a while, I’d see the glitch three or four times in just a few seconds. And when that happened, it would invariably trigger the start of another day or two of desperate searching for the cause.

This happened at the start of this week. And so once again, I set out with my little obsession, striving to find where the glitch was coming from.

And for the first time, I found its actual source.

This time out, I was once again investigating the idea that one or more of the digital elements might be the problem, as that seemed most likely to me. My approach to the investigation this time contained two new ideas:

- First, I changed the “Front Plate” to be a solid, bright magenta, on the theory that flickers would be more visible with it drawn in a static, bright, and vivid color, rather than as an animating mostly-dark muted color. (I feel like this helped me spot the glitches a lot more easily)

- Second, I disabled all of the digital elements but one. Instead of having a mix of all six types of digital element active at once, I would instead make six times as many of just one element type. And then I would just let the effect run, with me sitting and staring at it for a half hour or so, doing nothing except watching for glitches to happen.

I did this once for each digital element type, and the flicker never went away.

This wasn’t a big surprise; I had done similar tests a few times before. But I was doing this test again anyway, just double-checking the results.

But there was one big difference this time. In the past, once I saw a flicker I would immediately move on to test the next digital element. This time, I kept watching for the whole duration.

As a result, this time I saw something I’d never noticed before: that when I only had the Digital Chips enabled, the flicker was substantially more frequent than when I was testing any other element. All of the elements seemed to cause the flicker, but something about the presence of lots of Digital Chip elements was making the glitch occur far more frequently than otherwise. I never would have noticed that if I’d immediately moved on when the first glitch showed itself, as I had before.

The “Digital Solid Block” and “Digital Rez Block” also seemed to show more flickering than the “Digital Line”, “Digital Dotted Line”, and “Digital Line Block” elements, though still far less than the “Digital Chip”.

Now that I knew to suspect the Digital Chips, the obviously suspicious thing about them was that they were the most complicated object being drawn in the main menu; each one was being drawn in six or seven separate parts, and they had far more geometry than any of the other digital elements. So if the glitches were worse with the Digital Chips, maybe it had something to do with all those extra draw calls?

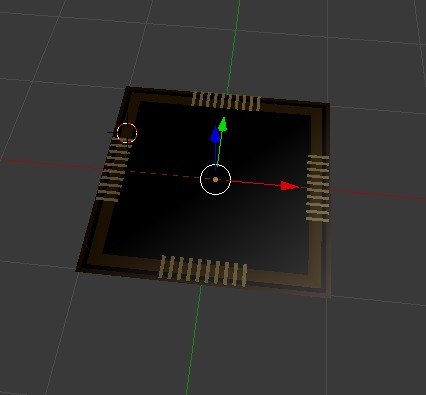

The visual models for all of these digital elements are being created in code at runtime, and all but the Digital Chip were drawn as single pieces. To test the theory that the “multiple pieces” factor was why the Digital Chip seemed to be causing more glitches than the other elements, I quickly built an identical version of the Digital Chip model in Blender, and had the game render that optimised Blender model instead of the inefficient code-built model.

A Digital Chip, in Blender

With that one change – drawing the Chip as a single model from a data file instead of from a collection of code-generated pieces – suddenly the glitches were almost entirely gone. Even more gone than the other single-piece digital elements.

This was the big clue. I was now absolutely certain that wherever the glitch was coming from, it had something to do with how we drew the Digital Chip model that had been generated in code, and how that differed from how we drew the identical Digital Chip model which had been loaded from a file.

A Minor Digression

The VectorStorm library is slightly weird.

While it was designed as a realistic engine of the sort that commercial game studios used at the time I wrote it, it’s different in one major way:

When a game studio wants to upgrade to use a new generation of rendering technology, they typically create a brand new engine, designed entirely around the new technology. The old engine is effectively thrown away, and is never used again.

VectorStorm hasn’t done that.

The VectorStorm library was never rewritten as we upgraded rendering technology. It began as a simple renderer that drew 2D vector lines using OpenGL 1.2. Over time, it was upgraded to draw solid triangles, and then real 3D models, using OpenGL 2.1, then 3.0, and now 3.3. Through all of this, it has mostly maintained backwards-compatibility. With only a few minor adjustments, games I wrote for even the earliest versions of the VectorStorm library would still work today under the modern library.

To accomplish this, VectorStorm has a number of “compatibility” interfaces. These provide support to allow games to do things which you’re not supposed to do in modern games, but which were common in older ones, so that the older games I’ve written can still work with the latest engine.

Movie poster for 'In the Heart of the Sea'

The Discovery

VectorStorm contains a bunch of utility functions for creating renderable

geometry. One such function is vsMakeSolidBox2D(), which does exactly

what it sounds like; it constructs a set of rendering commands which will draw

a filled 2D rectangle to the screen.

I noticed that most parts of the Digital Chip were being created by calling

vsMakeSolidBox2D() to make a bunch of boxes of different colors and sizes.

Which is fine; surely a commonly-used function like that, provided by the

VectorStorm library itself would be doing something sensible, right? When I

upgraded the engine from using OpenGL 2.1 to 3.0, I would have upgraded those

commonly-used utility functions to do things using the new, best-practices

methods, right?

Well, as it turns out, no. That function dates back to VectorStorm’s OpenGL 2.1 days, from before we were able to store vertex data directly on the GPU. Instead, it’s embedding raw model geometry into the rendering commands which games send to VectorStorm’s rendering engine. Which means.. it’s unexpectedly using one of VectorStorm’s compatibility interfaces for old code.

Loading models from files exported by 3D tools such as Blender, on the other hand, is something I’ve only implemented relatively recently. These file-based models are being drawn using all the modern best practices. So that would perhaps explain why a model could be causing glitches when it was created in code, but not causing glitches when loaded from a file; one was using modern rendering, and one was using an old, less-rigorously tested compatibility interface, even though both were drawing basically the same data.

Illustration by Rockwell Kent

At Long Last, the Solution

So! If the glitches go away when we draw the digital elements using the modern interface, then we can pretty clearly point at the compatibility interface as being the problem.

So all we need to do is to update vsMakeSolidBox2D() to use modern rendering

commands instead of the old compatibility ones, and the whole problem is

solved. And this was an easy task, and did actually work! After doing this, I

never saw the glitch again.

So yay! Job done!

…

The Actual Solution

Okay, yeah, that’s not a very satisfying end to the story. We didn’t figure out what was actually going wrong with the compatibility interface; just that something was wrong. We didn’t so much find the bug, as find what city the bug lived in, and then decided to simply go out of our way to avoid that whole city and everything inside it.

For all I know, there are likely to be other things elsewhere in the game which are also using that compatibility interface; we should really try to find the problem in the compatibility interface and fix that problem, rather than simply writing it off entirely.

So here’s some more information about that compatibility interface:

- When we receive a rendering command which contains embedded vertex data, VectorStorm simply pushes that explicit vertex data into a temporary buffer and immediately sends that buffer straight over to the GPU.

- We do this every time we receive an old-style “here’s some explicit vertex data” command. We make no effort to check whether this vertex data might have previously been sent to the GPU and still be accessible there; we just add the vertex data to the buffer and push it over again.

Sure, that isn’t fantastic for performance, but it’s only a compatibility interface; it’s not meant to be blazingly fast, it just needs to keep old code working until it can be brought up to date with the modern way of doing things.

A technical note about OpenGL buffer management

To try to make things efficient, we make this temporary buffer reasonably large, and reuse it for different things that draw through that old compatibility interface.

When we receive a rendering command containing vertex data, we first check if there’s space left in the buffer to hold that data. If so, we write the data into the buffer, right after the last thing we put into it, and let OpenGL know that we’ve added the data.

If there isn’t enough space left to fit the data into the buffer, we “orphan” the buffer. That is, we tell OpenGL to clear it out, and then we start filling it up again. OpenGL guarantees to us that anything which was drawing from data in the buffer before we “orphaned” it will still have access to all of that previous data, while anything we draw after orphaning the buffer will have access to the new data we now write into it. This is a really useful feature!

Right now, this buffer is one megabyte in size; that’s large enough to draw a few thousand rectangles before needing to orphan it and start again.

I’ve mentioned before that VectorStorm uses a “rendering command” system for

games to tell the engine what drawing operations to perform. Using modern

rendering commands, drawing a model created by vsMakeSolidBox2D() looks

like this:

renderQueue->SetMatrix(m_matrix); // sets where we are in space

renderQueue->BindBuffer(m_buffer); // sets our vertex data

renderQueue->TriangleListBuffer(m_indexBuffer); // draws triangles

renderQueue->ClearArrays(); // unsets our vertex data.

renderQueue->PopMatrix(); // unsets our position in space

Here, “m_buffer” and “m_indexBuffer” are references to buffers of data which are already stored on the GPU. These commands are executed using the modern rendering system.

In the old system, drawing that same model when created by vsMakeSolidBox2D()

worked like this:

renderQeuue->SetMatrix(m_matrix); // sets where we are in space

renderQueue->VertexArray(m_vertexPositions); // sets vertex positions

renderQueue->TexelArray(m_vertexTextureCoordinates); // sets vertex texture coordinates

renderQueue->TriangleListArray(m_triangleIndices); // draws triangles

renderQueue->ClearArrays(); // unsets the arrays set above

renderQueue->PopMatrix(); // unsets our position in space

Here, we’re using explicit arrays of data, instead of references to data that’s already on the GPU. This data would go through the “compatibility interface” to automatically push it over to the GPU so that it could be used during the

TriangleListArray()drawing command.

I should point out explicitly that this old system required more rendering commands to draw things than the modern system does, because we were sending different types of vertex data separately, instead of mingling the data all together into a single buffer of vertex data. It also required larger-in-memory rendering commands, because the rendering commands had to contain the vertex data itself, instead of just a tiny buffer identifier to tell the GPU which buffer we were asking it to use.

It’s also worth pointing out explicitly that vsMakeSolidBox2D() creates both

vertex position and vertex texture coordinate data, in case the “solid” box is

going to have a texture applied to it.

It was here that I became stuck again for a few hours. I was pretty sure that I was staring directly at the problem, but I simply couldn’t see it.

I was right about that.

In fact, at this point I had every piece of information necessary to solve the puzzle, and these clues have all now been presented in this post. All that remains now is to put those clues together to show what was going on, and precisely where The Glitch was coming from. (Have the programmers amongst you already figured it out? I hadn’t; I needed one more big hint, before I saw it.)

What finally got me unstuck was reducing the size of the buffer used by the compatibility layer for sending vertex data to the GPU. I found that the smaller I made the buffer, the more frequent the rendering glitches became. Once I brought it down to about 500 bytes in size, the rendering glitches became almost continuous. And this helped me to see exactly what was going wrong.

Illustration by James D. Hart

The Actual Problem

The bug was in the way we would orphan the compatibility layer’s scratch buffer when vertex data came in, if we didn’t have enough space to fit it all into the buffer. That’s absolutely a normal and correct thing to do, but since we had multiple blobs of data coming in separately, there was an edge case that we could miss. We could sometimes (albeit quite rarely) get this sequence of events:

renderQeuue->SetMatrix(m_matrix);

renderQueue->VertexArray(m_vertexPositions);

// vertex positions fit, we copy them into the GPU buffer

renderQueue->TexelArray(m_vertexTextureCoordinates);

// texture coordinates don't fit; orphan the buffer and put them in the fresh buffer.

renderQueue->TriangleListArray(m_triangleIndices);

// now draw the triangles.

renderQueue->ClearArrays();

renderQueue->PopMatrix();

Remember that orphaning a buffer only guarantees the accessibility of the orphaned data to draws which were begun before the buffer was orphaned. And in this case, this draw doesn’t start until after the buffer has been orphaned.

Often, everything happened to work out okay even when this set of events did happen. But sometimes, this led to invalid, uninitialised data being present where OpenGL was looking for those vertex positions. And when that happened, that was what we saw as The Glitch; objects drawing at the wrong sizes or wrong positions, and causing havoc for a single frame, where we had orphaned the buffer in between storing different parts of the vertex data.

Fixing this bug required me to not upload the vertex data to the GPU

immediately when the game gives it to us, but instead to hold on to it and wait

until the draw call happens (in this case, TriangleListArray()). Only at that

point do we look to see whether all the various pieces of vertex data are

going to fit into the remaining space of the buffer at the same time, and if

not, we orphan the buffer before writing any of the data into it. That way,

we’re sure that all the vertex data is actually present in the buffer

simultaneously during the draw call.

I made that fix, and even with lots of compatibility-mode models being drawn, I haven’t seen the glitch again.

This is a good book. It is worth reading.

Postscript:

Remember the note at the start of this blog post, where I talked about how this felt in my gut like a problem of invalid memory access?

It turns out that that’s exactly what this glitch was all along, just not in a way I ever considered. It wasn’t my code accessing bad CPU memory, it was my code telling the GPU to access bad GPU memory, by telling OpenGL to clear data from a buffer that was going to be used as part of a draw operation, before that draw operation was issued.

For those with an interest, this fix went into VectorStorm commit 3feced5

Epilogue

That is the whole story of my struggle with this bug. In a weird way, the days since fixing it have been unsettling for me. I’ve been missing the constant companionship of that minor flicker which has been nearby for years. A familiar itch, now more noticeable in its absence than it ever was while present.

I still catch myself watching for it from the corner of my eye. A superstitious certainty that it has tricked me once again; gone into hiding to wait for me to become too confident, too self-assured. Always lurking, ready to spring out upon me when the moment is right, when I least expect it. In my heart of hearts, I still believe that one day, perhaps soon, I will see it once more, and it will lead me on another merry, wide-ranging chase through code old and new.

But for today, I have other tasks to accomplish.